Everyone is a manager now

AI just made everyone a first-time manager. The question isn't whether you're good at the work. The question is whether you can encode what "good" means so the work ships without you.

The hardest transition in any career just became mandatory for everyone using AI.

Linda Hill, a Harvard Business School professor who studied first-time managers for over three decades, found that the skills that make you a great individual contributor are "starkly different" from the skills that make you effective as a manager. New managers must learn to strike the right balance between delegation and control.

For most knowledge workers, this transition has been optional. Many remain individual contributors and go on to build successful careers. AI changes that equation. In a world where anyone can generate almost anything with agents and prompts, everyone needs to become, in effect, a manager to level up. They need to coordinate the work, set the quality bar, and build the gates that enforce it.

They need to turn their judgment into systems that scale.

AI just made everyone a first-time manager.

The AI agent review bottleneck

I remember my own transition. At Yodle, I went from Senior Product Manager to Director of Product Marketing. The first six months were rough. I refined my approach over the next decade, eventually leading global, remote marketing teams at well-funded SaaS startups. I never expected to hit the same wall again as a bootstrapped solopreneur building OnePerfectSlice.

Last Thursday afternoon, I had five Claude Code sessions running in parallel: three on software development (scoping, speccing, and coding) and two on content (LinkedIn posts and blog posts). I thought I was being productive. Then I hit that wall. I had generated far more output than I thought possible, and none of it could move forward without my input. Within a single thirty-minute block, I was context switching across domains, bouncing between a terminal and markdown files.

I've started calling this wall review debt: the backlog of unverified decisions that accumulates when your output exceeds your ability to judge it. Spinning up agents and implementing workflows, the very things that made me faster, were now making me slower.

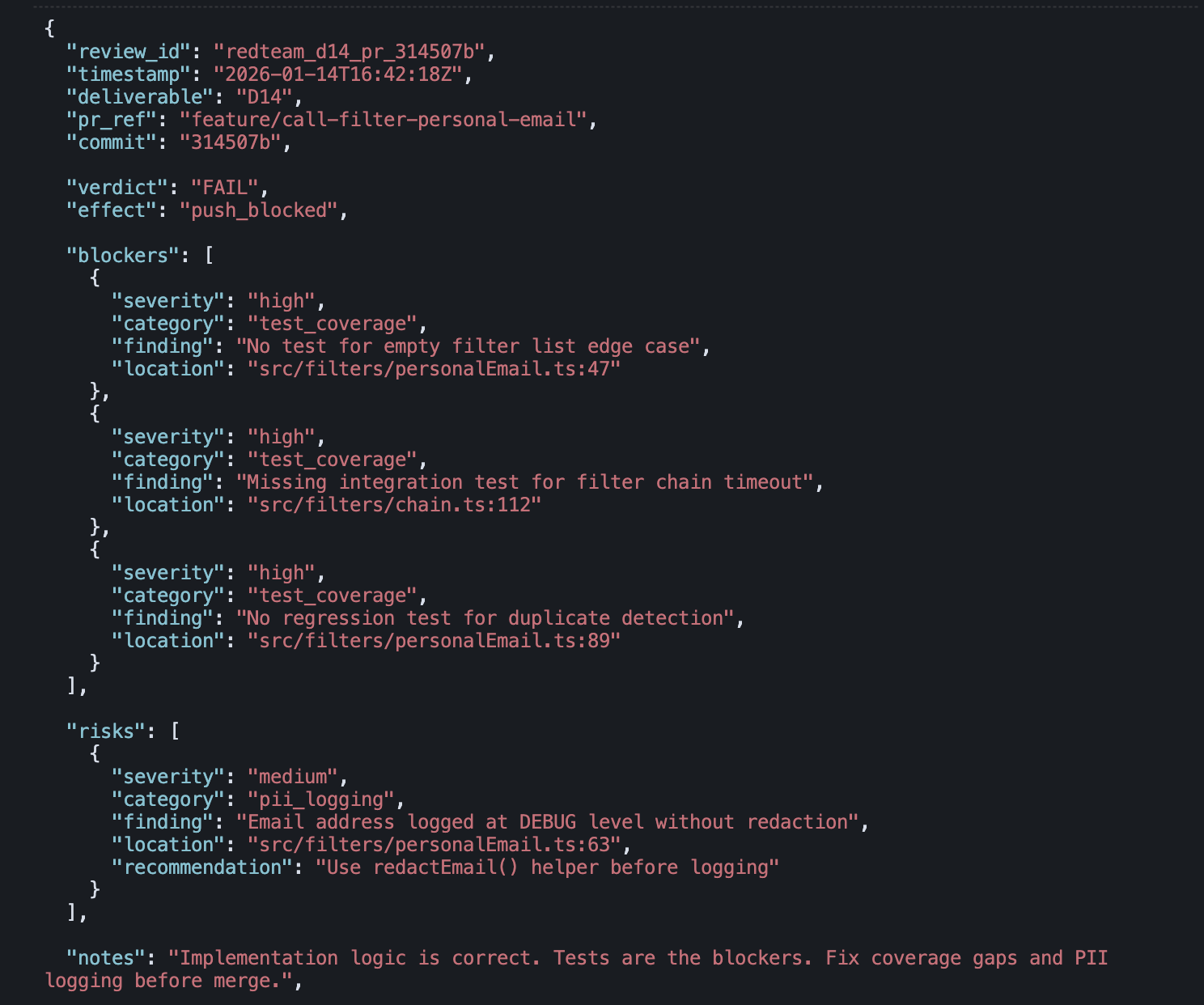

What finally snapped me out of it was automating both spec review and code review. A review marker from that process literally blocked a push: FAIL. Three missing tests. One PII logging risk. The gate didn't need my eyes on it, or on every line of code. It caught the problem without me. What it still needed was to know what to do in that situation without me. Not just this time, but every time it encountered something similar.

That's when I realized I'd been solving the wrong problem.

As I leaned harder on AI, I needed to stay out of the loop unless I was genuinely needed.

Making decisions and delegating them to agents

This is where Hill's research on first-time managers became directly applicable. In Becoming a Manager, she presents a framework for how leaders make decisions:

- Autonomous: I decide alone.

- Consultative: I decide after getting advice.

- Joint: We decide together. A decision isn't final until both sides actively support it.

- Delegated: The team decides within parameters I set.

The way out was to move routine decisions into delegated mode by encoding as much as I could into two files: a conventions.md file and a principles.md file. The conventions file captures the rules: patterns to follow, gotchas to avoid, code standards to enforce. The principles file captures the judgment: what to optimize for, when to deviate, how to handle tradeoffs.

The more I could encode in those two files, the more the agents could operate without me.
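To make "delegated mode" a little more concrete, here is a minimal Python sketch of how an agent harness might pick a decision mode for a topic it runs into, assuming conventions.md and principles.md have already been parsed into simple topic-to-text lookups. The `Playbook` and `route` names, the topic keys, and the rule that a principles-only topic falls back to consultative mode are all hypothetical, chosen for illustration rather than describing any particular setup.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    # Hill's four decision modes.
    AUTONOMOUS = "autonomous"
    CONSULTATIVE = "consultative"
    JOINT = "joint"
    DELEGATED = "delegated"


@dataclass
class Playbook:
    # Hypothetical in-memory stand-ins for conventions.md and principles.md.
    conventions: dict[str, str] = field(default_factory=dict)  # topic -> rule
    principles: dict[str, str] = field(default_factory=dict)   # topic -> judgment


def route(topic: str, playbook: Playbook) -> Mode:
    """Pick a decision mode for a topic (assumed routing rule, for illustration)."""
    if topic in playbook.conventions:
        # A written rule exists: the agent decides within the parameters I set.
        return Mode.DELEGATED
    if topic in playbook.principles:
        # Only judgment is written down: the agent proposes, I sign off.
        return Mode.CONSULTATIVE
    # Nothing encoded yet: escalate so I decide, then write the decision down.
    return Mode.AUTONOMOUS


# Usage with hypothetical topics.
pb = Playbook(conventions={"frontend-modals": "Reuse the existing modal pattern."})
print(route("frontend-modals", pb))    # Mode.DELEGATED
print(route("pricing-page-copy", pb))  # Mode.AUTONOMOUS
```

The design point is that the default for anything unencoded is escalation, so every new entry in either file shrinks the set of decisions that land back on you.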

Giving AI agents more autonomy

The fix isn't new. Organizations solved the manager bottleneck with decision-making frameworks decades ago. We escalate expenses to the Finance department. We open PRs for code review before merging a feature branch.

So what's new? Three things:

- Capturing the context (the "why" and "how") so agents can reference it.

- Updating conventions deliberately as new decisions come up, so agents know when it's safe to proceed without explicit human review.

- Blocking progress unless there's a documented decision trace (passed because..., failed because..., passed with risks because...).
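A gate that blocks progress without a decision trace can be sketched as a small script. This is a minimal sketch, assuming an automated reviewer writes a JSON marker to a hypothetical `.review/marker.json` containing a `verdict` (`PASS`, `FAIL`, or `PASS_WITH_RISKS`) and a `because` list of reasons; the path, field names, and verdict labels are all assumptions for illustration. It blocks on FAIL, and it also blocks any verdict that arrives without its "because".

```python
#!/usr/bin/env python3
import json
import sys

# Hypothetical marker format, e.g.
# {"verdict": "FAIL", "because": ["Three missing tests", "PII logging risk"]}
VERDICTS = {"PASS", "FAIL", "PASS_WITH_RISKS"}


def check_marker(marker: object) -> tuple[bool, str]:
    """Return (allowed, reason). Block unless there is a documented decision trace."""
    if not isinstance(marker, dict):
        return False, "blocked: marker is not a JSON object"
    verdict = marker.get("verdict")
    reasons = marker.get("because") or []
    if verdict not in VERDICTS:
        return False, "blocked: no recognizable verdict"
    if not reasons:
        # A bare PASS is not enough; the gate wants the "because".
        return False, f"blocked: {verdict} without a decision trace"
    trace = "; ".join(str(r) for r in reasons)
    if verdict == "FAIL":
        return False, f"blocked: FAIL because {trace}"
    return True, f"{verdict} because {trace}"


def main(path: str = ".review/marker.json") -> int:
    try:
        with open(path, encoding="utf-8") as f:
            marker = json.load(f)
    except (OSError, json.JSONDecodeError):
        print("blocked: missing or unreadable review marker", file=sys.stderr)
        return 1
    allowed, reason = check_marker(marker)
    print(reason, file=sys.stderr)
    return 0 if allowed else 1


if __name__ == "__main__":
    sys.exit(main())
```

Saved as an executable `.git/hooks/pre-push`, a script like this would stop `git push` whenever it exits nonzero, because Git aborts the push when that hook fails. It deliberately ignores the remote name and URL Git passes as arguments.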

The system doesn't prevent all mistakes. It catches them and turns them into learning.

Two examples. Claude Code kept building modals on the frontend of OnePerfectSlice that didn't conform to our existing patterns. After fixing it for the third time, I added the rule to my conventions.md file. With that in place, the same mistake didn't happen again. The FAIL marker from my Thursday story works the same way. The implementation logic was correct, but the tests weren't there, and the gate caught it. Push blocked. No rubber-stamping required. The system enforced the standard I'd set, without me.

Every time I fix the work manually, I create more review debt for myself, because the same mistake will happen tomorrow. When I take the time to document the decision, the convention, and when to escalate, I can handle more than before and move even faster. So now, when I start a new body of work, I think in terms of process, stages, gates, and review, with principles and conventions files for everything. And if I'm not happy with the output, I fix the system.

Managing AI like a first-time manager

Hill found that first-time managers have to unlearn the instinct to do the work themselves. The ones who make it measure themselves differently: not by what they produce, but by what their system produces without them.

That's the transition AI is forcing on everyone now. The question isn't whether you're good at the work. The question is whether you can encode what "good" means so the work ships without you.