Content Engineering: an attempt at solving AI Slop, with AI

I can run 3+ dev agents through a Linear backlog. My content pipeline still ends with me, a printed draft, and a ballpoint pen. Here's what I built to close the gap.

I can run 3+ autonomous dev agents through a Linear backlog. They pick up issues assigned to them, write code, pass tests, and merge PRs without me touching a single line. Today, my content workflow still ends with me, a printed draft, and a ballpoint pen.

In my attempt to treat content as an engineering problem, I discovered that writing great content is materially harder than writing clean code. Code has clear definitions of success and a means to validate its outputs. Content, by comparison, still runs on “vibes.”

The system I built currently gets me 60% of the way there. The last 40% is voice, the flow of the article, the sentence structure, the choice of “workflow” over “process,” and other intangibles that I haven't yet been able to extract into a markdown file.

Here's what I built to get that 60%, and what you can try on your own.

Why content is harder than code

Unlike content, code has clear definitions of done that make autonomy possible. Tests pass or fail. The build compiles or it doesn't. When I run dev agents through Linear, they can operate autonomously because "good" has a boolean: green checkmarks, merged PRs, shipped releases. The agents don't need my judgment because code standards, architecture guidelines, and hard requirements can all be externalized.

Content has no corresponding evals. "Does this capture what I am trying to communicate?" is not a function I can call. I know it when I read it, but I can't specify it in advance.

So I tried to engineer around it. Four versions later, I am still printing drafts, marking them up by hand with a pen, and editing them in a Google Doc.

v1.0: Dump Thoughts and Draft

My first approach was stupidly simple: dump thoughts into a doc, give Claude a well-defined prompt, review the draft. Every draft was technically proficient enough to be legible but failed to engage, evoke emotion, or offer distilled insight. They all felt dead.

A recent comment I left on LinkedIn captured what was happening...

"But here's the thing. Another example of AI killing originality. Practically every post I read has that phrase."

That comment got 21,000 impressions, 26 likes, and 9 replies. Someone tagged #TeamHuman and wrote "AI is favoring AI."

I wasn't just seeing it in other people's content. I was also, in my attempt to do more, producing it. After enough dead drafts, I ran an autopsy and catalogued the tells that are instantly recognizable:

- Hedge phrases. "It's worth noting that..." "One might argue..."

- Sterile symmetry. Every section is roughly the same length, every paragraph has roughly the same structure.

- Faux-authority. "Experts agree..." "Studies show..." — without naming any expert or citing any study.

So I kept rewriting prompts, thinking the instructions were the problem. Then I realized that, much like AI-driven software development, I needed to fix the inputs if I wanted better outputs.

v2.0: Introducing a Content Brief

After v1 failed repeatedly, I added structure to the content workflow. Not prompting structure (telling the AI how to write) but pipeline structure (deciding what to write before involving the AI). My updated flow became: dump thoughts, produce a brief, write the draft. I also defined what a “Content Brief” was.

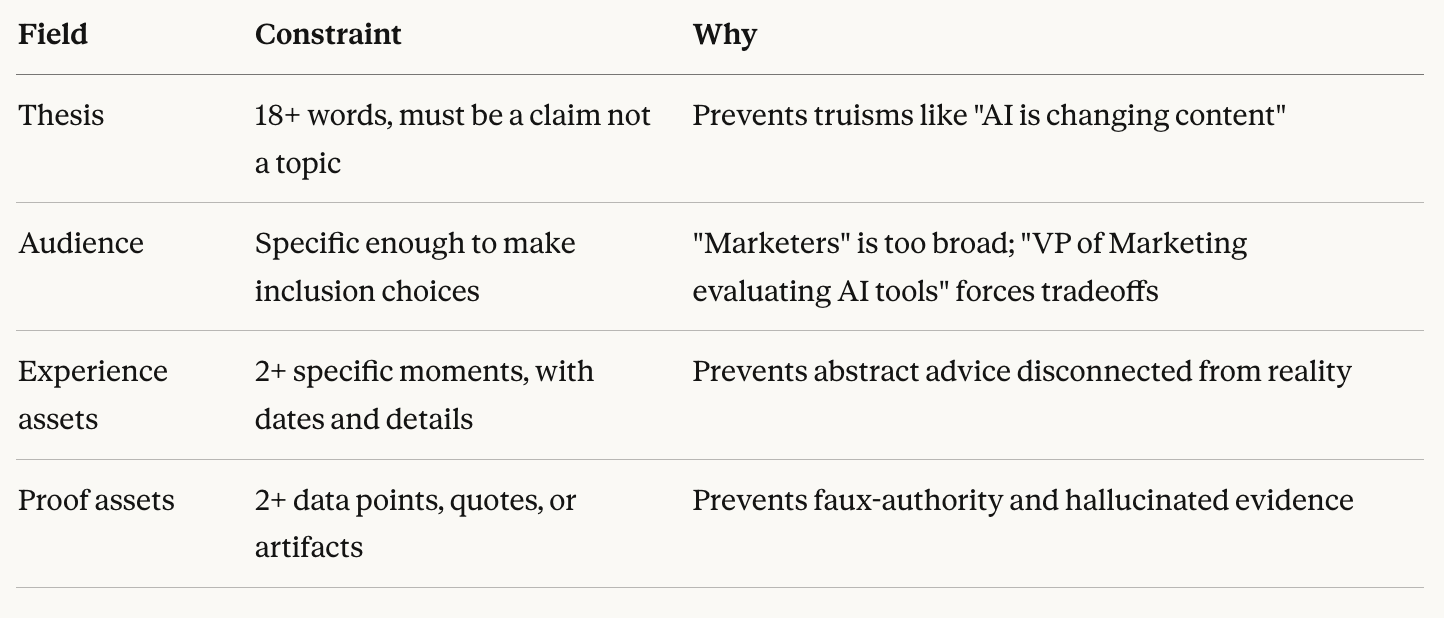

Evals for a Content Brief

Finally, I told Claude that if we can’t fill out a brief with the dumped material, we don’t have enough to produce a draft.
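That "no brief, no draft" rule is easy to make mechanical. Below is a minimal Python sketch of such a gate. The field names are hypothetical stand-ins for the template in the image above, and the minimums (a thesis, two experience assets, two proof assets) come from the checklist at the end of this post; the real template may check more.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """Hypothetical brief fields; the actual template has its own evals."""
    thesis: str = ""
    experience_assets: list[str] = field(default_factory=list)
    proof_assets: list[str] = field(default_factory=list)


def brief_gaps(brief: ContentBrief, min_assets: int = 2) -> list[str]:
    """Return the reasons a brief is not ready; an empty list means 'go draft'."""
    gaps = []
    if not brief.thesis.strip():
        gaps.append("missing thesis")
    # Count only non-blank entries so empty placeholders can't pass the gate.
    experience = [a for a in brief.experience_assets if a.strip()]
    proof = [a for a in brief.proof_assets if a.strip()]
    if len(experience) < min_assets:
        gaps.append(f"need {min_assets} experience assets, have {len(experience)}")
    if len(proof) < min_assets:
        gaps.append(f"need {min_assets} proof assets, have {len(proof)}")
    return gaps


def ready_to_draft(brief: ContentBrief) -> bool:
    return not brief_gaps(brief)


# Hypothetical example: one proof asset short, so no draft yet.
brief = ContentBrief(
    thesis="Content is harder to automate than code",
    experience_assets=["Linear dev agents", "printed drafts and a pen"],
    proof_assets=["LinkedIn comment metrics"],
)
print(brief_gaps(brief))  # ['need 2 proof assets, have 1']
```

Returning a list of gaps rather than a bare boolean mirrors the point of the gate: it tells you what material is missing before any drafting happens.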

I looked at a few Content Briefs and thought, “yes, this will be 1000x better.” Spoiler alert: it wasn’t. Introducing a brief fixed a few problems (drawing from my lived experience, referencing assets, using language closer to how the audience actually talks), but the title didn’t match the opening, and the opening didn’t flow into the rest of the article.

v3.0: Adding a clear outline

In 11th grade, for both English and AP American History, I had to write independent papers of at least ten pages. I remember two things from that work: I spent five times as long on the outline as on the draft, and I was happy with the output.

So, for long-form content, I introduced an outline stage between the brief and the draft.

The brief caught material and argument gaps: do I actually have enough to write this? The outline caught argument drift: does this hold together as a piece? They solved different problems. A strong brief with no outline gave me (and therefore Claude) a good direction, like a good spec in software development. The outline forced a through-line, sort of like acceptance criteria.

Despite the improvements from outlines, openings still felt consistently flat. But this was real progress. I didn’t have to rewrite the entire article. I spent most of my time reworking the title and the opening.

v4.0: Improving outlines with opening arguments

To solve this problem, I borrowed from speechwriting. Speakers don't have two paragraphs to earn attention: they have 30 seconds before the audience mentally checks out. So I catalogued seven opening types that professional speechwriters use, and I require each outline to specify which one the draft should use:

- Story: Drop into a specific moment with sensory detail, where the reader experiences something rather than being told about it

- Provocative Question: Create an information gap that demands resolution, making the reader need to know the answer

- Bold Statement: Make a claim that demands proof, which is the opening you're reading in this post

- In Media Res: Start mid-action with no setup, trusting the reader to catch up

- Quote: Borrow authority from someone credible, or set up a quote you'll contradict

- Imagine: Put the reader in a vivid scenario, making an abstract problem concrete

- Shocking Statistic: Force recalibration with a number that surprises, creating immediate interest

Each title type has natural opening pairings that work together. A "Mistake" title (like "The Mistake I Made With AI Agents") pairs naturally with Story or In Media Res because the reader expects to see the mistake happen. A "Shift" title (like this post) pairs with Bold Statement because you're making a claim about how things have changed. The frame is set before drafting begins, so the AI knows what kind of opening to write.
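The outline requirement can be checked the same way. This is a minimal sketch, assuming each outline records a title type and an opening type. The seven openings are the ones listed above, but only the two pairings named in this post (Mistake and Shift) are encoded; letting other title types pass unchecked is an assumption of the sketch, not part of the system described here.

```python
from enum import Enum
from typing import Optional


class Opening(Enum):
    STORY = "Story"
    PROVOCATIVE_QUESTION = "Provocative Question"
    BOLD_STATEMENT = "Bold Statement"
    IN_MEDIA_RES = "In Media Res"
    QUOTE = "Quote"
    IMAGINE = "Imagine"
    SHOCKING_STATISTIC = "Shocking Statistic"


# Only the pairings named in the post; other title types are not guessed at.
TITLE_PAIRINGS: dict[str, set[Opening]] = {
    "Mistake": {Opening.STORY, Opening.IN_MEDIA_RES},
    "Shift": {Opening.BOLD_STATEMENT},
}


def check_outline_opening(title_type: str, opening: Optional[Opening]) -> Optional[str]:
    """Return a problem description, or None if the outline's frame is set."""
    if opening is None:
        return "outline must name an opening type before drafting"
    natural = TITLE_PAIRINGS.get(title_type)
    if natural is None:
        return None  # assumption: unknown title types are not checked
    if opening not in natural:
        names = ", ".join(sorted(o.value for o in natural))
        return f"{title_type} titles pair with {names}, not {opening.value}"
    return None


print(check_outline_opening("Shift", Opening.BOLD_STATEMENT))  # None
print(check_outline_opening("Mistake", Opening.QUOTE))
# Mistake titles pair with In Media Res, Story, not Quote
```

The check runs before drafting, which is the point: the frame is fixed while it is still cheap to change.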

This was the highest-leverage fix. My next question became: how much closer to final is each AI draft getting?

Tracking improvements with each post

This system gets me 60% of the way to a publishable draft. I was able to cut my editorial and revision time from three hours to about one. The last 40% still eludes me; perhaps that's because I haven't published enough posts to tune the system.

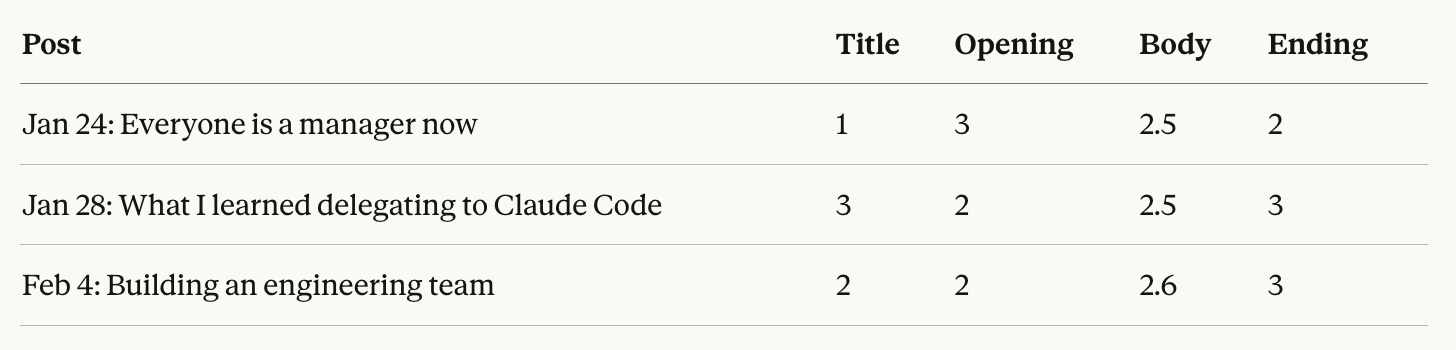

In the meantime, I track edit intensity on a 0–3 scale for each section of every post: 0 means unchanged from the AI draft, 1 means light edits, 2 means significant revision, 3 means rewritten entirely.
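A minimal sketch of how those per-section scores could be rolled up into a per-post summary. The summary fields (mean intensity, share of untouched sections) are illustrative choices, and the section names and scores in the example are hypothetical, not real post data.

```python
from collections import Counter
from statistics import mean

# The 0-3 scale: unchanged, light edits, significant revision, rewritten entirely.
EDIT_LABELS = {0: "unchanged", 1: "light", 2: "significant", 3: "rewritten"}


def summarize_post(scores: dict[str, int]) -> dict:
    """Summarize per-section edit intensity (section name -> 0..3) for one post."""
    if not scores:
        raise ValueError("no sections scored")
    for section, score in scores.items():
        if score not in EDIT_LABELS:
            raise ValueError(f"{section}: score {score} is outside 0-3")
    counts = Counter(EDIT_LABELS[s] for s in scores.values())
    return {
        # Lower mean intensity means the AI draft landed closer to final.
        "mean_intensity": round(mean(scores.values()), 2),
        # Fraction of sections published exactly as drafted.
        "untouched_share": round(counts["unchanged"] / len(scores), 2),
        "counts": dict(counts),
    }


# Hypothetical scores for one post.
post = {"title": 3, "opening": 3, "section 1": 1, "section 2": 0, "closing": 2}
summary = summarize_post(post)
print(summary["mean_intensity"], summary["untouched_share"])  # 1.8 0.2
```

Comparing these numbers post over post is one way to see whether the drafts are actually moving toward final, independent of how long the editing took.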

I am still rewriting a lot, but the time to edit has decreased, and I believe the system and what it learns will compound. Patterns that repeat will become rules:

- "Mysterious titles don't land for synthesis posts": learned from the Jan 28 post, where I changed the title entirely. Now enforced in the brief template.

- "Mapping tables get cut in favor of narrative": learned from the Jan 24 post, where a table was deleted. Now avoided during outlining.

- "Heavy proof gets cut: story wins over framework": learned from the Feb 4 post, where instructional content was removed. Now scoped out explicitly.
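One way to make "patterns that repeat become rules" mechanical, using the three-occurrence threshold from the checklist at the end of this post: log each edit as a (post, pattern) pair and promote a pattern once it appears in three distinct posts. The log format, post IDs, and pattern labels below are hypothetical.

```python
from collections import defaultdict


def promote_rules(edit_log: list[tuple[str, str]], threshold: int = 3) -> dict[str, list[str]]:
    """Return patterns seen in at least `threshold` distinct posts, with those posts.

    edit_log holds (post_id, pattern) pairs recorded while editing.
    """
    posts_by_pattern: dict[str, set[str]] = defaultdict(set)
    for post_id, pattern in edit_log:
        # A set, so one heavily edited post can't create a rule on its own.
        posts_by_pattern[pattern].add(post_id)
    return {
        pattern: sorted(posts)
        for pattern, posts in posts_by_pattern.items()
        if len(posts) >= threshold
    }


log = [
    ("post-a", "cut mapping table"),
    ("post-b", "cut mapping table"),
    ("post-b", "cut mapping table"),  # same post twice still counts once
    ("post-c", "retitle"),
    ("post-d", "cut mapping table"),
]
print(promote_rules(log))  # {'cut mapping table': ['post-a', 'post-b', 'post-d']}
```

Counting distinct posts rather than raw edits is a design choice: it favors patterns that recur across pieces over quirks of a single draft.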

The 40% that's left is still what it was at the start: voice, rhythm, the choice of one word over another. The system hasn't solved that. I am trying to solve for it, but I am not sure I should.

Trying it yourself

You don't need the full system to give this a go. Start with whatever gate would catch the most problems in your current workflow.

- Write a brief before your next AI draft. Thesis, two experience assets, two proof assets. If you can't fill it out with real material, you don't have a post yet — and any draft you generate will need rescue, not refinement.

- Outline before you draft, and red-team the outline. Use a second model to look for thesis weakness, logic holes, and claims without evidence. The adversarial review catches what you can't see because you're too close to your own thinking.

- Pick an opening type before drafting. Constrain the first 10% and the rest stops defaulting to "In today's fast-paced world."

- Then track what you change. When the same edit shows up three times, make it a rule.
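For the red-team step, the adversarial review can be as simple as a fixed prompt handed to a second model. This is a minimal sketch that only builds the prompt; the wording is hypothetical, and sending it to a model is left to whichever client you already use.

```python
# The three checks from the red-team step; phrasing is illustrative.
RED_TEAM_CHECKS = (
    "thesis weakness: is the central claim vague, obvious, or unsupported?",
    "logic holes: does any section fail to follow from the one before it?",
    "claims without evidence: which assertions lack a named story, example, or source?",
)


def red_team_prompt(thesis: str, outline: list[str]) -> str:
    """Build an adversarial review prompt for a second model."""
    sections = "\n".join(f"{i}. {item}" for i, item in enumerate(outline, start=1))
    checks = "\n".join(f"- {c}" for c in RED_TEAM_CHECKS)
    return (
        "You are reviewing an outline you did not write. Be adversarial.\n"
        f"Thesis: {thesis}\n\n"
        f"Outline:\n{sections}\n\n"
        f"Report problems under each heading:\n{checks}\n"
        "Quote the outline item each problem refers to."
    )


# Hypothetical outline for illustration.
prompt = red_team_prompt(
    "Content is harder to automate than code",
    ["Opening: bold statement", "Why content is harder than code", "Four versions of the pipeline"],
)
print(prompt)
```

Keeping the checks in one constant means a promoted rule can be added to the review as one more line, rather than by rewriting the prompt.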

That's the loop. It's how the system gets better without you redesigning it.