Building an engineering team with Claude Code & Linear

If you removed the people from an engineering team, what would actually be left?

Not the codebase. Not the PRs. Not the incident postmortems. Not the shipped releases. What’s left is the operating system: the roles, the rituals, the review standards, the definition of done, and the escalation paths that keep quality high as throughput rises.

Most AI use cases I’ve come across try to automate execution without rebuilding that scaffolding. The work focuses on generating code (aka vibe coding) and ignores the choreography that makes software production, and I would argue any knowledge work, both high quality and scalable: how work is shaped, reviewed, tested, merged, released, and supported.

In this post, “team” means the operational structure a team runs on: roles, workflows, quality bars, and escalation paths, not headcount. If you can externalize that structure in markdown plus a project system (Linear, Jira, Trello), and enforce it with quality gates (think evals plus automated checks), you can build virtual teams with AI.

That’s the thesis.

It came out of the last two months building OnePerfectSlice.

By the end, I had six agents running in parallel against a shared Linear backlog, operating while I slept.

Here’s how I got there.

High-Performing engineering teams are systems

Before talking about AI, it helps to be explicit about what a high-performing engineering team actually does well.

From my vantage point, a functioning team does four things.

First, it allocates responsibility. Work is broken into roles with clear ownership so decisions get made by the right people, close to the relevant slice of the system. Second, it creates flow. Work moves through a shared workflow with defined stages and handoffs so nothing falls through the cracks and “done” means the same thing at each step. Third, it enforces judgment. The team applies consistent standards so the output is coherent, reviewable, and traceable back to a rationale. Fourth, it escalates risk. When something is novel, high-impact, or hard to reverse, it gets routed to higher scrutiny instead of being treated like routine work.

Most of this is invisible. Consistent quality and business impact are the by-products.

So when you ask why a codebase is consistent, nobody points to a single document. They point to the system that enforces it: the PR checklist, the code review standards, the test gates in CI, the linters and formatting rules, the incident review process, and the unwritten expectations that “this will not ship without X.” In other words, they point to a mesh of artifacts and habits that collectively define how the team works.

Pair Programming With AI, and Its Ceiling

Six months ago my workflow was classic ChatGPT pair programming. I would break down a task in chat, paste in context, copy code into the repo, then integrate and test in the IDE.

It worked, but it did not compound. I reviewed everything, caught edge cases, re-explained patterns I had already explained, and made judgment calls on every change. The context window was my only memory. If a decision wasn't in the immediate prompt, it didn't exist. I was re-litigating the same architectural choices. Each new chat meant re-stating the codebase structure, re-sharing common utilities, and re-stating why we do things a certain way. The productivity gains were real, but they were additive rather than multiplicative. I was getting help, but I wasn’t getting leverage.

Three months ago I shifted to Claude Code and the experience changed. I started abstracting development into repeatable slash commands and deliverables. My capability jumped dramatically. I could take on heavier work like third-party integrations without having to repeat myself.

Then I hit the next ceiling.

I was running one agent, one step at a time. I would kick off a command, wait for completion, answer clarifying questions, and iterate. The bottlenecks were the blocking questions inside the process and my inability to run multiple lanes of work in parallel. I felt like I was operating a machine, pushing the same buttons to keep it humming. I spent a lot of time waiting, coffee in hand, watching runs complete. I had swapped the frustration of syntax for the boredom of orchestration.

That’s when I realized I needed to move from a single operator to a development team. I needed the things a team normally supplies around execution: shared process, shared standards, decision making frameworks, escalation paths, and a way to distribute work without routing everything through me.

So I did what any competent manager does: I rebuilt the process so it could scale.

My path to building an AI engineering team

I came into this with scar tissue from my time as a Product Manager, and from leading teams outside engineering. I’m used to treating output quality as a function of roles, workflow, and gates, not individual heroics.

The real failure mode I kept hitting wasn’t “Claude can’t code.” It was that I still had a single-operator system. Every task depended on my judgment, my memory, and my attention. That doesn’t scale. So instead of trying to get better at prompting, I did the thing a competent manager does when a team can’t scale: I made the operating system explicit.

That meant two moves:

- write down the process so it could be repeated without me, and

- enforce it so quality could be independently verified.

Phase 2: Process and Enforcement I stopped writing prompts and started writing playbooks.

Eventually, my /spec command became 847 lines long. That length wasn’t overhead—it was the mechanism that made the output reliable. Each failure revealed an assumption I’d been carrying implicitly and forced me to write it down: how we name things, when to split files, which error-handling patterns we use, when to ask for clarification versus proceeding with a reasonable default.

The version numbers tell the story of iteration: code.md is at v5.7. specify.md is at v3.9. Those versions are the residue of dozens of iterations where something didn’t work and I had to make the rule explicit. Claude reads these playbook files at the start of every session, which means the playbooks are the process. To change behavior, I just change the files.

I coupled the playbooks with a red-team review at the end of each step of the process. That addition was the difference between “please follow the process” and “the process is enforced."

This was now an executable system. When I update specify.md to add a new validation check, the next spec run picks it up automatically. And it comes with no retraining required. No “did everyone see the update?” tax.

Then I took the next step.

Phase 3: Parallelism as a Queue

Once the playbooks were explicit and the quality bar was enforced, the bottleneck stopped being correctness and became orchestration: how do I run multiple agents without turning the repo into a crime scene?

The answer was to move orchestration to Linear, the home of the Product backlog. Every piece of work lives as an issue with a title, a spec, acceptance criteria, and a current status: backlog→ spec'd→ ready → in-progress → in-review → merged. It created a single source of truth for handoffs, progress, and decisions. I basically replicated what I used to look at when I was a Product Manager, but adapted it to better fit spec-driven development.

Once the work had a canonical home, issues became the unit of work and the phases became the workflow. Then labels became routing. I assigned issues to different agents: agent:astoria, agent:boston, agent:chicago and constrained each agent to only pull issues it was assigned. If I need more development capacity, I basically just instantiate a new agent.

To make parallel execution safe, I used git worktrees. Each agent operates in its own isolated directory with its own branch state and dependencies, so concurrent work didn’t collide. Linear handled coordination and sequencing; worktrees handled isolation; the playbooks handled consistency.

Then I put the whole thing in a loop.

This is the Raph Wiggum principle: you don’t “run an agent,” you set up a simple repeating cycle—check the queue, do the next thing, report back, repeat—and let the system produce throughput over time. A team is just a set of these loops operating against a shared backlog with shared standards.

In a traditional startup, scaling from one developer to six takes months of recruiting and onboarding. In this system, “scaling the team” meant spinning up new worktrees and adding more labeled lanes in Linear. I went from one developer to six overnight without multiplying the coordination tax because the process and enforcement layer didn’t change.

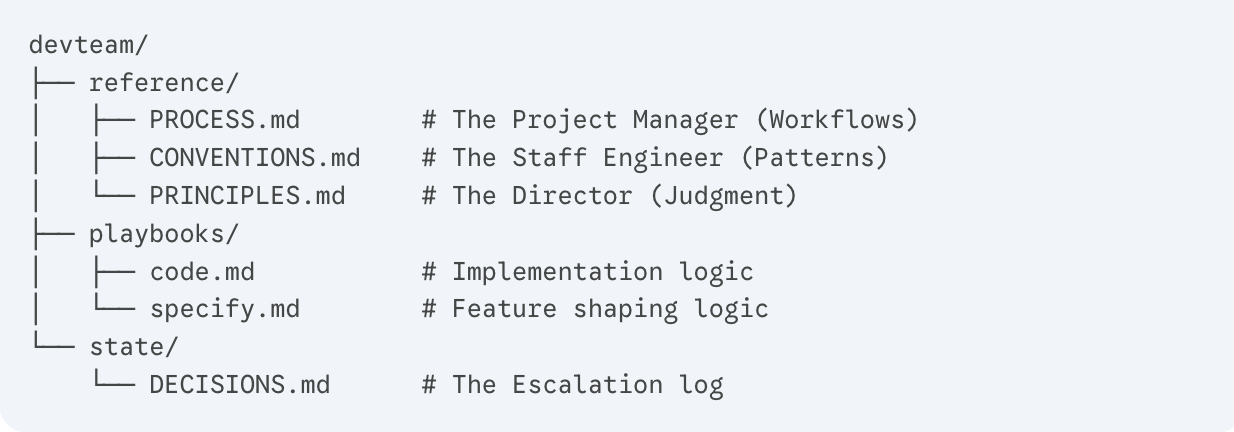

My `devteam/` folder is now the team’s operating manual. Linear is the team’s middle management: it tracks state, manages the queue, and provides the interface where I can see exactly what Astoria or Chicago are stuck on without needing a standup meeting.

Redefining teams in the AI era.

Looking back, everything I externalized fell into three buckets: process knowledge (how work flows), domain knowledge (what we know about this codebase), and evaluative judgment (how we decide what's good enough). Each one maps to a role you'd normally hire for.

That framework deserves its own post. I promised it last week - but first I want to show this isn't just a dev trick.

Next up: how the same framework runs my content team.